During my years designing learning experiences, I discovered that learning doesn't always happen in a classroom or through a course. It happens in the in-between: in the pauses between decisions, in the reflection after a mistake, and in conversations where experience meets values.

When I developed study texts for banking professionals, I embedded mini case studies and operational reflections drawn from real ethical dilemmas faced by bankers — many shared directly by compliance officers from member banks. These were not theoretical stories. They were lived experiences — moments where professional judgment, integrity, and business decisions intersected.

The impact was visible. Learners began to see ethics not as a topic to be memorised but as a muscle to be exercised daily. They connected learning with the reality of their work — and that’s when it hit me: learning should feel as natural as breathing.

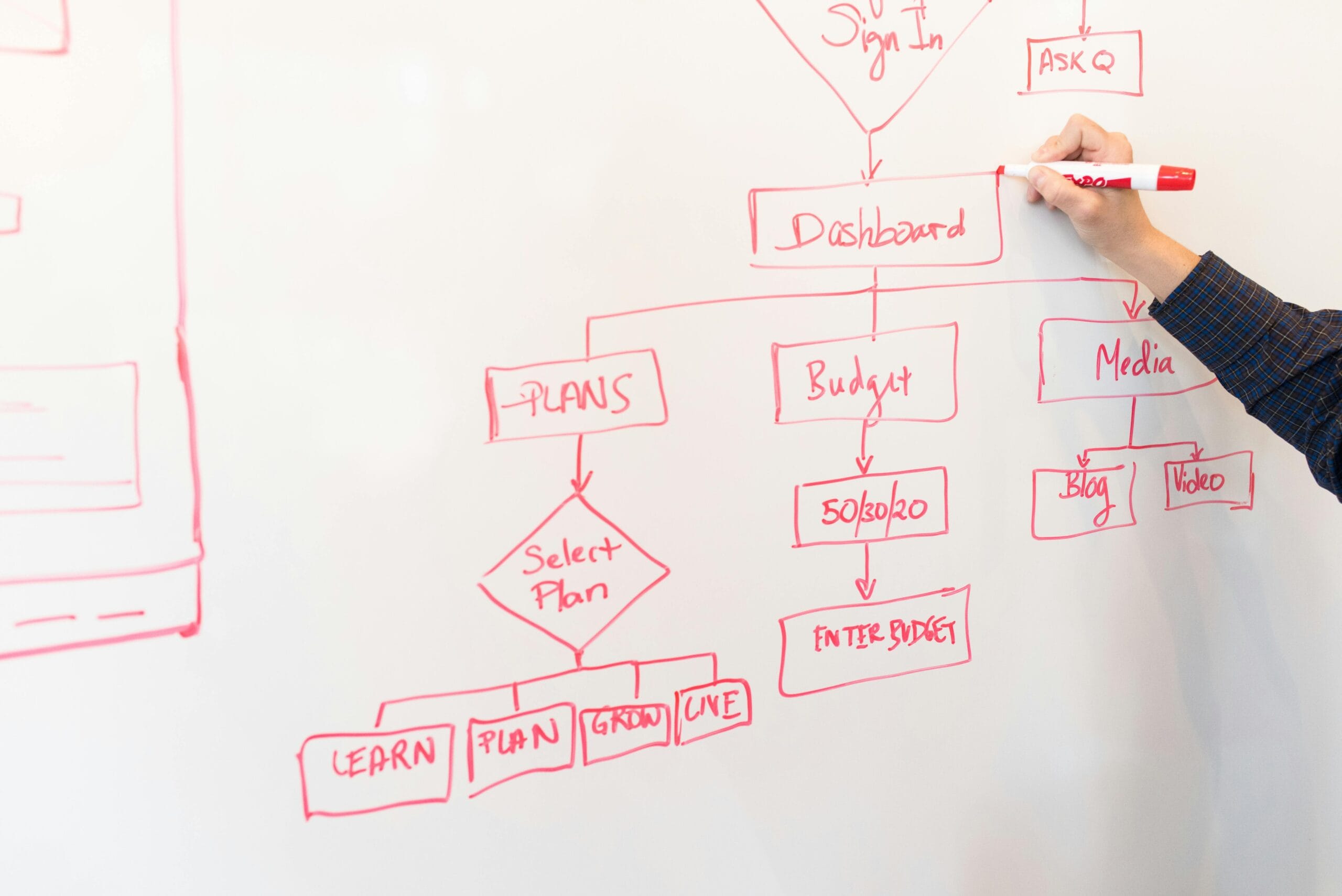

Traditional corporate learning tends to be episodic, built around scheduled programmes, annual calendars, or mandatory e-learning completions. But these are merely moments in time. To create sustained capability, organisations need to move from programme-based to ecosystem-based learning.

An ecosystem approach ensures learning is embedded in the flow of work. That means:

- Learning content is tied directly to real challenges and business outcomes.

- Knowledge is continuously refreshed through feedback, collaboration, and systems.

- Employees access learning as they work, not after they work.

When learning becomes part of the operational rhythm — when every project, review, or task doubles as a learning opportunity — that’s when it starts to resemble breathing.

Most organisations already have e-learning platforms, but many operate like digital libraries — filled with content no one remembers to visit.

Modern e-learning should function less like a repository and more like an intelligent companion. It should:

- Personalise recommendations based on role, performance data, and learning goals.

- Integrate with daily work tools (like Teams, Slack, or Outlook), so learning comes to the user — not the other way around.

- Measure impact through improved performance or decision-making, not just completion rates.

Imagine this: as a banker prepares a client proposal, their platform automatically recommends a five-minute refresher on ethical selling practices or a short clip on managing conflicts of interest.

That’s learning in the flow of work — breathing, not forcing.

Adults don’t learn by sitting through long modules; they learn in moments of need. The best learning experiences are designed around those precise moments:

- When applying something new

- When solving a problem

- When facing change

- When seeking to deepen understanding

- When something goes wrong

For example, the mini case studies in those banking study texts allowed learners to revisit ethical grey areas in real time, as they encountered them in their jobs. They weren't just reading compliance rules; they were mentally rehearsing judgment calls before they even happened.

Learning that’s designed for moments equips employees to breathe through complexity with awareness and clarity.

If learning is to become as natural as breathing, we must stop measuring it through attendance and completion. The more meaningful question is: What did this learning contribute to the business, to decision-making, or to culture?

When learning outcomes can be traced to business improvement, compliance strength, or enhanced professionalism, the ROI becomes tangible — and learning becomes indispensable.

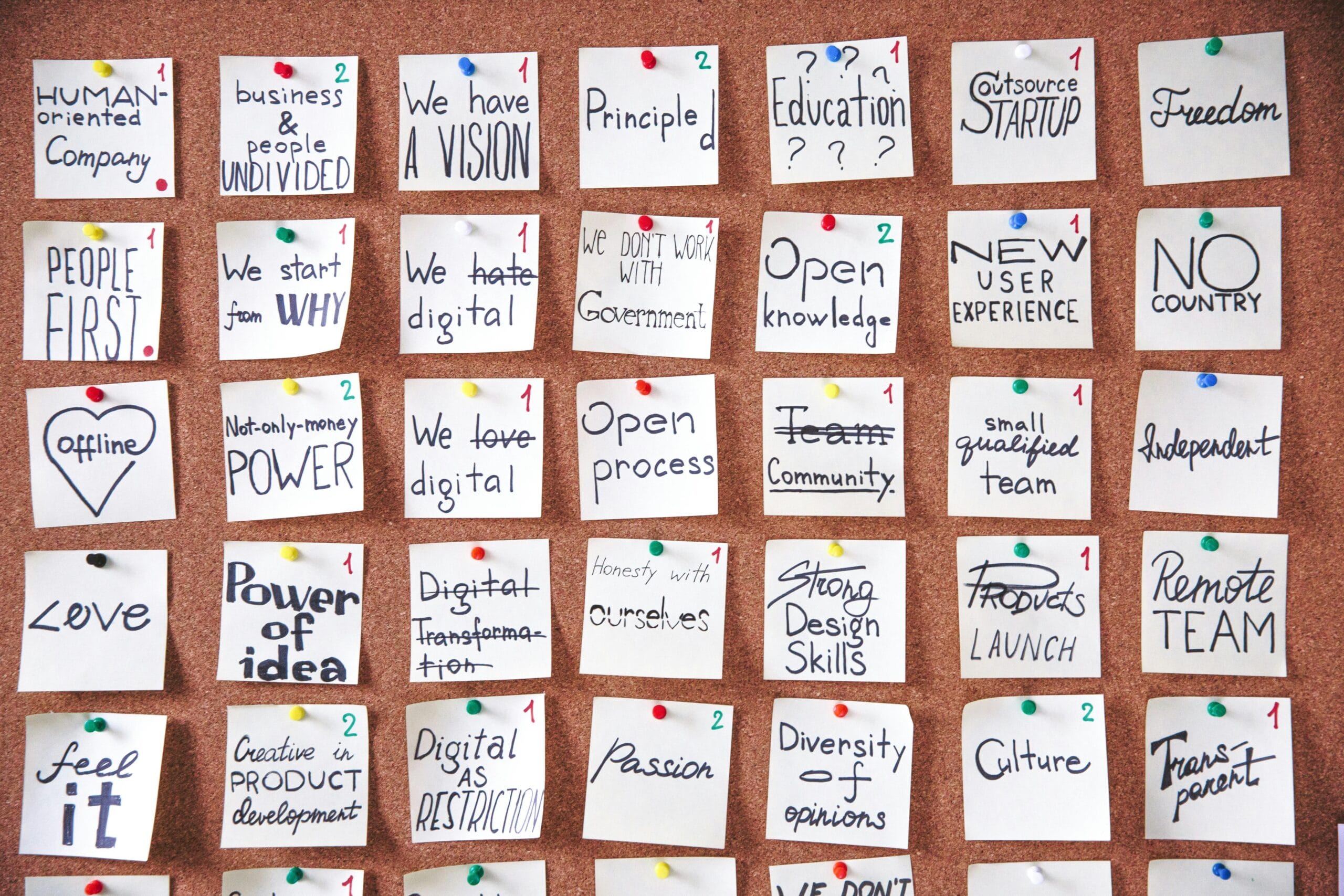

A culture that breathes learning celebrates curiosity. It encourages reflection over rote answers, and inquiry over compliance.

Leaders must set the tone. When leaders share what they’re learning — not just what they know — they model humility and growth. When organisations reward knowledge sharing, experimentation, and reflective dialogue, learning becomes a natural part of the corporate DNA.

Because curiosity fuels innovation, and innovation keeps organisations alive.

Technology scales learning, but humans sustain it.

When learning is positioned as an HR compliance requirement, it loses its power.

When it’s driven by leadership as a business enabler, it transforms.

We can leverage AI to recommend what to learn next, but only a mentor or coach can help make sense of that learning in context. A discussion circle can turn an e-learning module into a meaningful reflection session, and those discussions must be led by our executives. Leaders must be able to say not just how many employees have completed training, but how many are now capable of driving better outcomes.

Learning that’s aligned with strategy, measured by contribution, and supported by culture — that’s what turns knowledge into oxygen for organisational growth.

Truth is, you don’t need a new platform or a million-ringgit transformation to start embedding learning into your organisation’s DNA. You just need intention, design, and consistency. In my reading on how organisations scale the impact of learning, I noticed a few recurring patterns:

1. They turn everyday work into learning opportunities

I came across organisations like Toyota that institutionalised reflection as part of operational execution. Whether through kaizen practices or After Action Reviews, learning happens immediately after action, not months later in a classroom.

2. They scale internal knowledge instead of constantly buying external expertise

Companies such as Google demonstrated how peer-led learning models can scale institutional knowledge. Their Googler-to-Googler initiative showed that employees themselves can become powerful learning multipliers.

3. They break learning into smaller, more consumable formats

From what I’ve read, organisations like Deloitte moved towards shorter, bite-sized learning experiences because employees are increasingly time-poor and overwhelmed by long-form training.

4. They bring learning into existing workflows

Microsoft's ecosystem, particularly Microsoft Viva Learning, reflects a broader shift towards embedding learning into platforms employees already use, rather than expecting them to search for learning separately.

5. They make managers responsible for sustaining learning culture

I noticed examples such as Adobe, where development conversations became part of regular manager check-ins rather than an annual HR exercise.

When I reflect on my experience designing learning, the biggest realisation is this: learning doesn’t need to be scheduled — it needs to be lived.

When employees see learning as part of their identity, not just their job description, it becomes instinctive. When systems, tools, and leadership align to support that instinct, learning becomes like breathing: effortless, essential, and invisible, yet sustaining everything.

In a world where work never stops changing, organisations that learn continuously don't just keep up; they stay alive.

And employees who breathe learning into every decision don’t just perform — they transform.